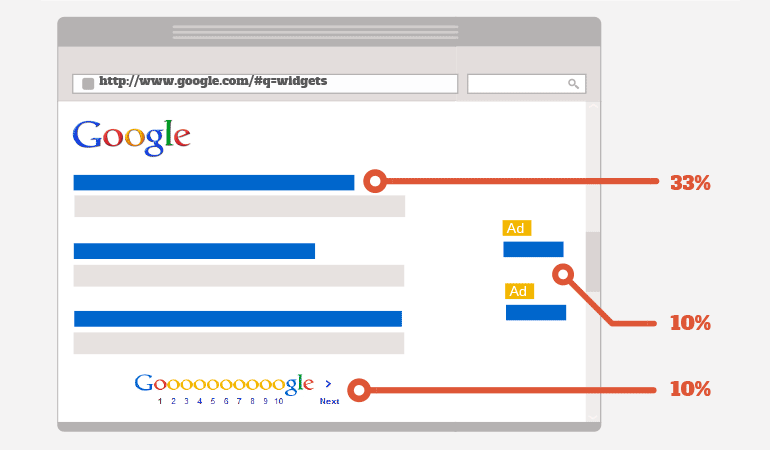

90% of people don’t scroll past the first page of search results, so everyone wants to be visible there. That makes search engine optimization (SEO) as critical as ever for websites that want to rank higher and increase the chance that someone clicks through to their pages. SEO should not be overshadowed by third-party eCommerce platforms or pay-per-click (PPC) advertising: optimizing your own site is just as important as optimizing a product listing on Amazon.

Here are a few technical SEO basics every website should review:

Onsite Review

An onsite SEO review means making sure your website’s pages, titles, tags, and overall structure are optimized for the keywords you’re targeting.

Here’s a list of things that should all be present and correctly optimized:

Content

Your content gives your readers, customers, and viewers an instant impression of what your company is about. If you landed on a site full of spelling and grammatical errors, you would not take it seriously. You might even leave once you realized how badly everything was written.

Beyond its effect on your visitors, high-quality content also helps you rank higher in search engine results.

When you’re writing content for your site, always include important keywords, but only in context and where it is appropriate to do so. This increases the chances of users finding your web pages via search engines. Never overuse keywords or stick them where they don’t belong. In the online marketing industry this is known as keyword stuffing: not only will your users struggle to understand your content, but search engines may also penalize the page as spam. Here’s an example:

Looking for the best place to buy cheap comfortable office chairs? We have cheap comfortable office chairs here for sale. Here on our website, you’ll find all of the best cheap comfortable office chairs across the web. Check out our inventory of cheap comfortable office chairs now!
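To make the problem measurable, here is a minimal Python sketch that counts how often a phrase appears in a block of copy and what share of the words it takes up. The function names are our own, and search engines publish no official density threshold, so treat the number as a rough warning sign rather than a rule.

```python
import re


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def count_phrase(text: str, phrase: str) -> int:
    """Count how many times the words of `phrase` appear in a row in `text`."""
    words = tokenize(text)
    target = tokenize(phrase)
    n = len(target)
    if n == 0:
        return 0
    return sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)


def phrase_density(text: str, phrase: str) -> float:
    """Share of all words in `text` that belong to occurrences of `phrase`."""
    words = tokenize(text)
    if not words:
        return 0.0
    return count_phrase(text, phrase) * len(tokenize(phrase)) / len(words)


stuffed = (
    "Looking for the best place to buy cheap comfortable office chairs? "
    "We have cheap comfortable office chairs here for sale. Here on our "
    "website, you'll find all of the best cheap comfortable office chairs "
    "across the web. Check out our inventory of cheap comfortable office "
    "chairs now!"
)

if __name__ == "__main__":
    phrase = "cheap comfortable office chairs"
    print(count_phrase(stuffed, phrase), round(phrase_density(stuffed, phrase), 2))
```

If one phrase accounts for a large share of a short paragraph, as it does in the example above, the copy probably reads as spam to people as well.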

Metadata

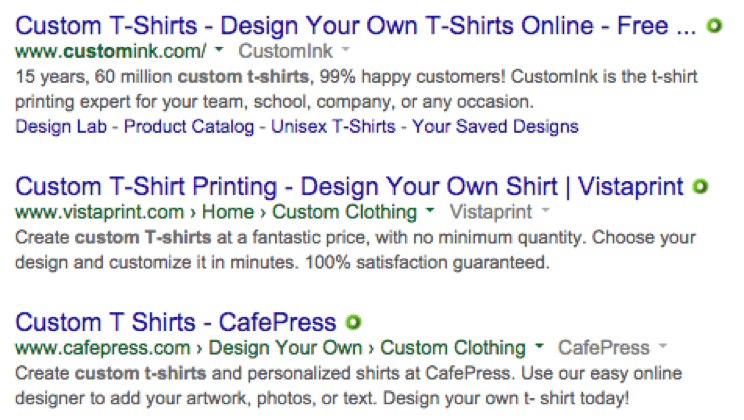

Meta titles and descriptions are the short summaries that search engines display on search result pages. They are the first thing people see when they run a search, so they need to be relevant and informative. They must also describe the content on the page and help the user decide whether your result is what they’re looking for.

Meta titles should not exceed 60 characters or so, and meta descriptions should not exceed 160 characters. This is roughly the maximum amount of text that will show on a search result page, so make the most of the available space.

Avoid repeating meta titles and descriptions, stuffing keywords, and adding your store name at the end of every single one. This can actually do more harm than good from a search engine optimization perspective. Every product should have a unique meta title and description that is relevant to the particular characteristics of that product.

If you’re clever with Microsoft Excel, it is possible to bulk edit and optimize meta titles and descriptions using formulas. This is especially useful when there are many variations of what would be considered one product.
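If you’d rather script it than use spreadsheet formulas, the same idea can be sketched in a few lines of Python. This is only an illustration: the `Variant` fields (`name`, `color`, `pattern`) are hypothetical and would come from your own product export.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    # Hypothetical product attributes; use whatever columns your catalog has.
    name: str
    color: str
    pattern: str


def build_meta_title(variant: Variant, max_len: int = 60) -> str:
    """Join the attributes into one title and refuse anything over the limit."""
    title = f"{variant.name} – {variant.color} – {variant.pattern}"
    if len(title) > max_len:
        raise ValueError(f"Title is {len(title)} characters, limit is {max_len}")
    return title


if __name__ == "__main__":
    variants = [
        Variant("God Bless America T-Shirt", "Red/Black", "Striped"),
        Variant("God Bless America T-Shirt", "Blue/White", "Solid"),
    ]
    for v in variants:
        print(build_meta_title(v))
```

Because each title is built from the attributes that distinguish one variant from the next, every variation ends up with its own unique title.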

Here are some examples of good and bad meta titles and descriptions:

Good Meta Title:

God Bless America T-Shirt – Red/Black – Striped

Bad Meta Title:

God Bless America Cheap T-Shirts, Custom-T-Shirts, Patriotic T-shirts |Yourstore.com

Good Meta Description:

God Bless America T-Shirt with Red/Black Stripes. XS. Made in the USA, 100% pre-shrunk cotton.

Bad Meta Description:

Made in USA Cotton T-Shirts.100% Cotton. Cheap t-shirts, custom t-shirts, patriotic t-shirts, custom t-shirts, Made in America. Best t-shirts, affordable t-shirts, great patriotic t-shirts.

Keeping your meta titles and descriptions clean, descriptive, and unique is the best practice.
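A quick way to audit this across many pages is a small script like the sketch below. It assumes you can export each page’s title and description into a dictionary keyed by URL path; the paths and the `audit_meta` name are made up for illustration, and the limits are the approximate ones mentioned above.

```python
from collections import Counter

TITLE_MAX = 60          # approximate limit discussed above
DESCRIPTION_MAX = 160   # approximate limit discussed above


def audit_meta(pages: dict[str, tuple[str, str]]) -> list[str]:
    """Return a list of problems; `pages` maps a path to (title, description)."""
    title_counts = Counter(title for title, _ in pages.values())
    desc_counts = Counter(desc for _, desc in pages.values())
    problems = []
    for path, (title, desc) in pages.items():
        if len(title) > TITLE_MAX:
            problems.append(f"{path}: title is {len(title)} characters")
        if len(desc) > DESCRIPTION_MAX:
            problems.append(f"{path}: description is {len(desc)} characters")
        if title_counts[title] > 1:
            problems.append(f"{path}: title is not unique")
        if desc_counts[desc] > 1:
            problems.append(f"{path}: description is not unique")
    return problems


shared_description = (
    "God Bless America T-Shirt with Red/Black Stripes. XS. "
    "Made in the USA, 100% pre-shrunk cotton."
)
sample_pages = {
    # Hypothetical paths; the titles are the good and bad examples above.
    "/tee-striped": ("God Bless America T-Shirt – Red/Black – Striped", shared_description),
    "/tee-stuffed": (
        "God Bless America Cheap T-Shirts, Custom-T-Shirts, Patriotic T-shirts |Yourstore.com",
        shared_description,
    ),
}

if __name__ == "__main__":
    for problem in audit_meta(sample_pages):
        print(problem)
```

Here the stuffed title is flagged for length, and both pages are flagged because they share one description.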

URL Structure

Depending on your content management system (CMS) or eCommerce platform, your automatically generated URLs may already have a good structure, like this one: www.example.com/topic-name

If not, and your URLs look like this: www.example.com/?p=578544, then go into your CMS’s settings and have it generate SEO-friendly URLs.
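Most platforms build these readable URLs for you, but if you’re curious what happens behind the scenes, here is a rough Python sketch of turning a page title into a “topic-name” style path. The `slugify` function is our own illustration, not any particular CMS’s implementation.

```python
import re
import unicodedata


def slugify(title: str) -> str:
    """Turn a page title into a lowercase, hyphen-separated URL segment."""
    # Strip accents so that "Café" becomes "Cafe" instead of disappearing.
    ascii_text = (
        unicodedata.normalize("NFKD", title)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Replace every run of non-alphanumeric characters with one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_text.lower())
    return slug.strip("-")


if __name__ == "__main__":
    print("www.example.com/" + slugify("Topic Name"))
```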

Robots.txt

If your site has a robots.txt file, you’ll find it at www.example.com/robots.txt (with your own domain in place of example.com).

Google’s Help pages state:

A robots.txt file is a file at the root of your site that indicates those parts of your site you don’t want accessed by search engine crawlers. The file uses the Robots Exclusion Standard, which is a protocol with a small set of commands that can be used to indicate access to your site by section and by specific kinds of web crawlers (such as mobile crawlers vs. desktop crawlers).

It works like this:

A robot wants to visit a website URL. For example, http://www.example.com/welcome.html.

Before it does so, it first checks for http://www.example.com/robots.txt, and finds a file containing these two lines: “User-agent: *” and “Disallow: /”.

Where you see “User-agent: *” it means this section applies to all robots crawling the site. The “Disallow: /” tells the robot that it should not visit any pages on the site since the root “/” has been added in the field.

Note: You don’t ever want to have your entire site disallowed by the robots.txt file since it will tell all search engine bots not to crawl your site.
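You can see how a well-behaved crawler reads these rules using Python’s built-in `urllib.robotparser` module. The sketch below is a minimal illustration; the second rule set, with its “/admin/” path, is a hypothetical example and not taken from any real site.

```python
from urllib.robotparser import RobotFileParser


def can_crawl(robots_txt: str, url: str, agent: str = "*") -> bool:
    """Return True if `agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


# The rules described above: every robot is blocked from the whole site.
block_all = "User-agent: *\nDisallow: /"

# A hypothetical, more typical file: only one section is off limits.
block_admin = "User-agent: *\nDisallow: /admin/"

if __name__ == "__main__":
    page = "http://www.example.com/welcome.html"
    print(can_crawl(block_all, page))
    print(can_crawl(block_admin, page))
```

With the first rule set the welcome page is off limits; with the second it can be crawled and only URLs under “/admin/” are blocked.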

Now, there are two important things you must also consider when using a robots.txt file:

- Many people think the robots.txt file is a secure way of protecting their site, but it is not: malware bots, email harvesters, and spam web crawlers can simply ignore it and continue to crawl your site.

- Also, since the /robots.txt file is a file readily available to the public, anyone can see what sections of your server you don’t want robots to access.

Canonical URLs

What is a canonical URL?

Matt Cutts, the former head of the web spam team at Google, explains it fairly well:

Canonicalization is the process of picking the best URL when there are several choices, and it usually refers to home pages.

For example, many people using the Internet today would consider these to be the same URLs:

- example.com

- example.com/

- example.com/index.html

Technically, all of these URLs are different, because a web server could return completely different content for each of them. When a search engine sees that you’ve set a canonical URL on the page, it will index all the URLs in that group as one page on its search engine results pages (SERPs).

So, for example, if all the above URLs pointed to your homepage, all you’d have to do is set the canonical URL to www.example.com. When the search engines’ bots crawl your site, they’ll treat the group of URLs above as the same page (www.example.com).
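In practice, the canonical URL is usually declared with a `<link rel="canonical">` tag in the page’s head. The Python sketch below shows the idea of collapsing the variants above into one URL and printing that tag. It is a simplification built on assumptions: it forces HTTPS and the www host, treats a few common index file names as the directory itself, and drops query strings, none of which is right for every site.

```python
from html import escape
from urllib.parse import urlsplit, urlunsplit

CANONICAL_HOST = "www.example.com"  # assumption: the one host you want indexed
INDEX_FILES = {"index.html", "index.htm", "index.php"}  # assumed index names


def canonical_url(url: str) -> str:
    """Collapse common variants of a URL into a single canonical form."""
    if "://" not in url:
        url = "https://" + url
    path = urlsplit(url).path or "/"
    directory, _, last_segment = path.rpartition("/")
    if last_segment in INDEX_FILES:
        path = directory + "/"
    return urlunsplit(("https", CANONICAL_HOST, path, "", ""))


def canonical_link_tag(url: str) -> str:
    """Render the tag that tells search engines which URL to index."""
    return f'<link rel="canonical" href="{escape(canonical_url(url))}">'


if __name__ == "__main__":
    for variant in ["example.com", "example.com/", "example.com/index.html"]:
        print(variant, "->", canonical_url(variant))
    print(canonical_link_tag("example.com/index.html"))
```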

SSL certificate

On Wednesday, August 6, 2014, Google announced that adding an SSL certificate to your site gives you a minor boost within its overall ranking algorithm.

The primary purpose of SSL is to make sensitive information sent across the Internet unreadable to everyone except the intended recipient.

This is important because once you submit your information across the Internet, it is passed from server to server before it reaches its destination. Any server between the sender and the recipient could see very sensitive information such as credit card numbers, usernames, and passwords if it weren’t encrypted with the SSL certificate.

When an SSL certificate is used, the information then becomes unreadable to everyone except the intended server you have sent the information to, which in turn protects it from hackers and identity thieves.

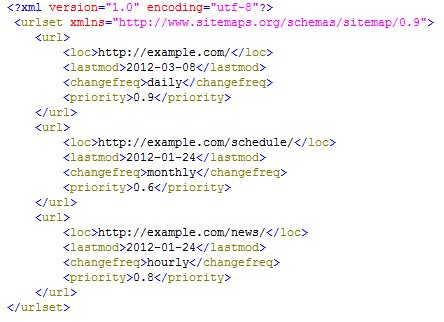

Sitemap

We’ve saved the best for last. One of the most important things you can have on your website is a sitemap.

You may be thinking, what is a sitemap? Wikipedia states:

A site map (or sitemap) is a list of pages of a web site accessible to crawlers or users. It can be either a document in any form used as a planning tool for web design, or a web page that lists the pages on a web site, typically organized in hierarchical fashion.

The sitemap organizes your entire website in one file, like the table of contents in a huge book. Search engine crawlers use it to work through your entire site.

Without a sitemap, the bots have to crawl your pages link by link to find and index the valuable ones, and that process takes a long time, even at the speed the Internet works at nowadays.

The sitemap provides the bots with direction and an outline of your entire site from where to begin crawling and where to end.

Many websites now have a sitemap file that’s automatically created at “/sitemap.xml”, but if your site does not have a sitemap, it is critical that you get one now.

There are many different ways to get a sitemap created depending on the content management system (CMS) or eCommerce platform you’re running. Here are just a few ways to create a sitemap for your site.

WordPress: You can install the Yoast SEO plugin, which offers a wide variety of SEO functionality for your site, including an auto-generated sitemap.

Volusion: Go into your settings and enable your SEO settings. You can follow the instructions here: https://support.volusion.com/hc/en-us/articles/210096027-SEO-Features

Manual: In the worst case, if there’s no plugin to auto-generate a sitemap for your CMS or eCommerce platform, or your site was custom built from the ground up with no platform, you can have this tool crawl your site and generate the “.xml” sitemap file: http://www.check-domains.com/sitemap/

Note: Make sure the sitemap is created as an “.xml” file and is added to the root of your site so that its URL looks like this: www.example.com/sitemap.xml
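For a custom-built site, a basic sitemap is simple enough to generate yourself. Here is a minimal Python sketch using only the standard library. It assumes you already have a list of your page URLs (the ones below are placeholders) and writes only the required `<loc>` entry for each page, leaving out optional fields such as last-modified dates.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[str]) -> bytes:
    """Build a minimal XML sitemap with one <url><loc> entry per page."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


if __name__ == "__main__":
    # Placeholder URLs; replace them with the pages of your own site.
    pages = [
        "https://www.example.com/",
        "https://www.example.com/topic-name",
    ]
    print(build_sitemap(pages).decode("utf-8"))
```

Save the output as “sitemap.xml” and upload it to the root of your site.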

Off-Site Review

Dealing with off-site SEO is a little harder and requires ongoing maintenance. Major search engines look at whether other sites link back to yours and use that as an indicator of good content. The more sites they see linking back to your site, the more valuable they tend to think your content is.

At least, that’s what they think.

In years past, this measurement was exploited by many black-hat backlink schemes. Search engines today look for reputable links instead. For example, if Inc.com and Wired.com link to your site, your domain authority will go up, which will help increase your rankings across the search engines, increase visitors, and in turn increase sales.

Domain Authority

Domain authority is a score on a 100-point scale, developed by Moz, that predicts how well a website will rank on search engines. Use domain authority when comparing one site to another or tracking the “strength” of your website over time. Moz calculates this metric by combining all of its other link metrics (linking root domains, the number of total links, MozRank, MozTrust, etc.) into a single score.

To determine domain authority, Moz employs machine learning against Google’s algorithm to best model how search engine results are generated. Over 40 signals are included in this calculation, which means your website’s domain authority score will often fluctuate. For this reason, it’s best to use it as a competitive metric against other sites rather than as a historic measure of your internal SEO efforts.

Backlinks

Backlinks are links between pages on the Internet that let users navigate from another page to the one you are looking at in your browser.

Backlinks play one of the biggest roles in determining your domain authority. Many people think of these simply as “links” rather than “backlinks”, and that is true, but “backlinks” describes the links coming into a web page or document, while “links” generally refers to the outbound links from the page itself.

Building backlinks is a big focus for those looking to get on the first page of Google. Without going in-depth into all the strategies that can be used to gain backlinks, here’s a list of creative places where you can get one:

- Create a video and place it on:

    - YouTube

    - Vimeo

    - Digg

- Change the video into audio format and share it on:

    - Soundcloud

    - Bandcamp

    - Audioboo

- Transcribe the video, place the content on your blog, and share it from there.

- Create an engaging infographic and share it.

- Convert the infographic into a slideshow and upload it to:

    - Slideshare

    - Prezi

    - Issuu

- Create an eBook and share it on:

    - Issuu

    - Scribd

    - Google Books

    - Project Gutenberg

These are just a few ideas for getting backlinks to point to your site.

Google Analytics / Webmaster Tools

Hard data lies at the heart of search engine optimization. Analytics platforms such as Google Analytics give us tremendous insight into the traffic visiting our website and what those visitors are doing.

Google’s Search Console, formerly known as Webmaster Tools, serves as the hub for assessing a website’s basic SEO status. Setting it up for your website is a critical step in assessing your basic search engine optimization health.

Many websites, including Google’s own, explain how to get more out of your analytics and webmaster tools. Here we’ll focus on Google’s Webmaster Tools.

After setting it up, submit the sitemap you created or the one that has been generated by your site and let Google’s bots crawl your web pages. After a couple of days, come back and review your Webmaster Tools data.

You want to:

- Check to see if you have any error messages

- Check for crawl errors

    - 404s

    - DNS errors

    - Other

- Review all HTML improvements

    - Duplicate meta descriptions

    - Long/short meta descriptions

    - Any errors in the title tags

BONUS: Local SEO

Local SEO follows the exact same strategies outlined above. After implementing them, you should begin to see significant growth in visitors, which in turn will increase your sales.

Reviews

Ask your clients to post feedback and reviews on websites such as Yelp and Google Reviews. You should only ask for this if you’re confident about the products or services that your business offers and know most customers are satisfied. These websites tend to pop up when someone searches for your local business name on Google, which can be a huge boost for visibility and helps build trust with the user.

Micro-Data

Microdata is HTML code that helps search engine crawlers interpret the information on web pages. In turn, Google may be able to use the supplementary information provided by the microdata to automatically add elements to your website’s listing on search result pages. This makes you stand out against your competitors’ websites and improves the user experience by providing more information about your business right on the search results page.

The method of implementing microdata varies by platform and requires at least basic HTML knowledge. If you’re using WordPress, see if your template is microdata friendly. There may also be plugins that can help you integrate microdata with your website.
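To give you a feel for what microdata looks like, here is a small Python sketch that prints an HTML snippet marked up with the `itemscope`, `itemtype`, and `itemprop` attributes. It assumes the schema.org “LocalBusiness” vocabulary, which this article doesn’t otherwise cover, and the business details are invented for the example.

```python
from html import escape


def local_business_microdata(name: str, telephone: str, opening_hours: str) -> str:
    """Return an HTML snippet describing a business with schema.org microdata."""
    return (
        '<div itemscope itemtype="https://schema.org/LocalBusiness">\n'
        f'  <span itemprop="name">{escape(name)}</span>\n'
        f'  <span itemprop="telephone">{escape(telephone)}</span>\n'
        f'  <meta itemprop="openingHours" content="{escape(opening_hours)}">\n'
        "</div>"
    )


if __name__ == "__main__":
    # Invented business details, for illustration only.
    print(local_business_microdata("Example Office Chairs", "+1-555-0100", "Mo-Fr 09:00-17:00"))
```

Visitors see the same name and phone number as before; the extra attributes are there for the crawlers.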

Backlinking Opportunities

Local backlinking is another powerful strategy that can be used to enhance your local search presence. This mainly includes acquiring backlinks that benefit your site locally. Some of the sites below are business directory or credibility sites, but they help build up your local link juice. It’s important to note that you should only stick to reputable directories and websites when acquiring backlinks for local SEO purposes. If not, you could end up doing more harm than good. Make sure to keep critical business information such as business hours and a recognized logo up to date on all of these local sites.

Here’s more information about different methods of gaining high-quality local backlinks:

- Google Plus

Every business should have a claimed Google Business Page that it controls. If you search for your business name on Google and your Google Business Page comes up but you have no control over it, you need to claim it. This is done by requesting that Google send a postcard to the address on the listing with a verification code that you must enter yourself to claim the profile. Customers may also leave reviews on your business page.

- Yellow Pages

Having an updated Yellow Pages listing is also important. The site boasts a high domain authority and many people use it as a resource when searching for local businesses.

- Yelp

Yelp is yet another place where your clients can leave reviews for your business. Ask your clients to leave feedback on review websites such as Yelp and your Google Plus profile. You should only ask for these if you’re confident about the products or services that your business offers and know most customers are satisfied. These websites tend to pop up when someone searches for your local business name on Google. These reviews can be a huge boost for visibility and for building trust with the user.

- Schools and Nonprofits in Your Community

If you partner with, sponsor, or work with nonprofits and schools in your community, ask them if they can provide a link to your website. If you haven’t done this already, create a plan for doing so. The high-quality backlinks you can receive from .edu and .gov sites are worth the effort!

- Business Partners

Take advantage of any businesses you’ve partnered with. Ask them to provide a backlink to your website and you can do the same for them in return. Building these local business links will also increase your visibility and credibility.

- Local Media Outlets

Sometimes ending up on a local media outlet’s website happens on its own. Hopefully, it’s in a positive light. Just by being a model business in the community, you might earn a local media spotlight and get a mention or a link from another business’s page. If you’re interested in being a little more aggressive about this, you can inquire about the kinds of opportunities they offer to have your business mentioned or linked to online.

Contact Us

SEO is not the same as it was. It is the dawn of a new era, and search engines are cracking down on spammers and hackers. New ideas are what’s needed to optimize a site. There are many things that can be done, but it’s hard to know where to start, and we hope this article has given you a starting point.

Do you have questions or need help? Consult one of our professionals who’ll be able to assist you in setting up, optimizing, and maintaining your site in 2016 and beyond!